Organizers

NeurIPS 2023

Tristan Naumann is a Principal Researcher in the Real World Evidence (RWE) group at Microsoft Research’s Health Futures. His research focuses on problems at the intersection of machine learning (ML) and health, specifically exploring relationships in complex, unstructured health data using techniques from natural language processing (NLP) and unsupervised learning. He values supporting the broader ML community through academic service and has served as General Chair, and in a variety of other roles, for NeurIPS, the AHLI Conference on Health, Inference, and Learning (CHIL), and Machine Learning for Health (ML4H).

Alice Oh

I am a professor at KAIST in the School of Computing with a joint appointment in the Graduate School of AI. My research interests are in developing and applying machine learning models for natural language processing. In our research group, we look at various data such as news, social media, Wikipedia, and programming education.

Amir Globerson

Moritz Hardt

Moritz Hardt is a director at the Max Planck Institute for Intelligent Systems. Prior to joining the institute, he was Associate Professor for Electrical Engineering and Computer Sciences at the University of California, Berkeley. Hardt's research contributes to the scientific foundations of machine learning and algorithmic decision making with a focus on social questions. He co-authored the textbooks "Patterns, Predictions, and Actions: Foundations of Machine Learning" and "Fairness and Machine Learning: Limitations and Opportunities".

Sergey Levine

Sergey Levine received a BS and MS in Computer Science from Stanford University in 2009, and a Ph.D. in Computer Science from Stanford University in 2014. He joined the faculty of the Department of Electrical Engineering and Computer Sciences at UC Berkeley in fall 2016. His work focuses on machine learning for decision making and control, with an emphasis on deep learning and reinforcement learning algorithms. Applications of his work include autonomous robots and vehicles, as well as applications in other decision-making domains. His research includes developing algorithms for end-to-end training of deep neural network policies that combine perception and control, scalable algorithms for inverse reinforcement learning, deep reinforcement learning algorithms, and more.

Kate Saenko

Kate is an AI Research Scientist at FAIR, Meta and a Full Professor of Computer Science at Boston University (currently on leave) where she leads the Computer Vision and Learning Group. Kate received a PhD in EECS from MIT and did postdoctoral training at UC Berkeley and Harvard. Her research interests are in Artificial Intelligence with a focus on out-of-distribution learning, dataset bias, domain adaptation, vision and language understanding, and other topics in deep learning.

Past academic positions:

- Consulting Professor at the MIT-IBM Watson AI Lab, 2019-2022

- Assistant Professor, Computer Science Department at UMass Lowell

- Postdoctoral Researcher, International Computer Science Institute

- Visiting Scholar, UC Berkeley EECS

- Visiting Postdoctoral Fellow, SEAS, Harvard University

Yarin Gal

Piotr Koniusz

Piotr Koniusz is an Associate Professor in Theoretical ML at the University of New South Wales (UNSW, Sydney). He is also a Principal (now Visiting) Research Scientist in Machine Learning at Data61❤CSIRO and an Honorary Associate Professor at the Australian National University (ANU). From 2013 to 2015, he was a postdoctoral researcher in the LEAR team at INRIA, Grenoble. He received his BSc in Telecommunications and Software Engineering in 2004 from the Warsaw University of Technology, Poland, and completed his PhD in Computer Vision in 2013 at CVSSP, University of Surrey, UK. His current interests include contrastive and few-shot learning. He has received awards such as the Sang Uk Lee Best Student Paper Award from ACCV'22, the Runner-up APRS/IAPR Best Student Paper Award from DICTA'22, and Outstanding Area Chair recognition from ICLR 2021-2023. He has served as a Workshop Program Co-Chair for NeurIPS'23 and WWW'25, and Senior Area Chair for several NeurIPS, ICLR, ICML and AISTATS conferences.

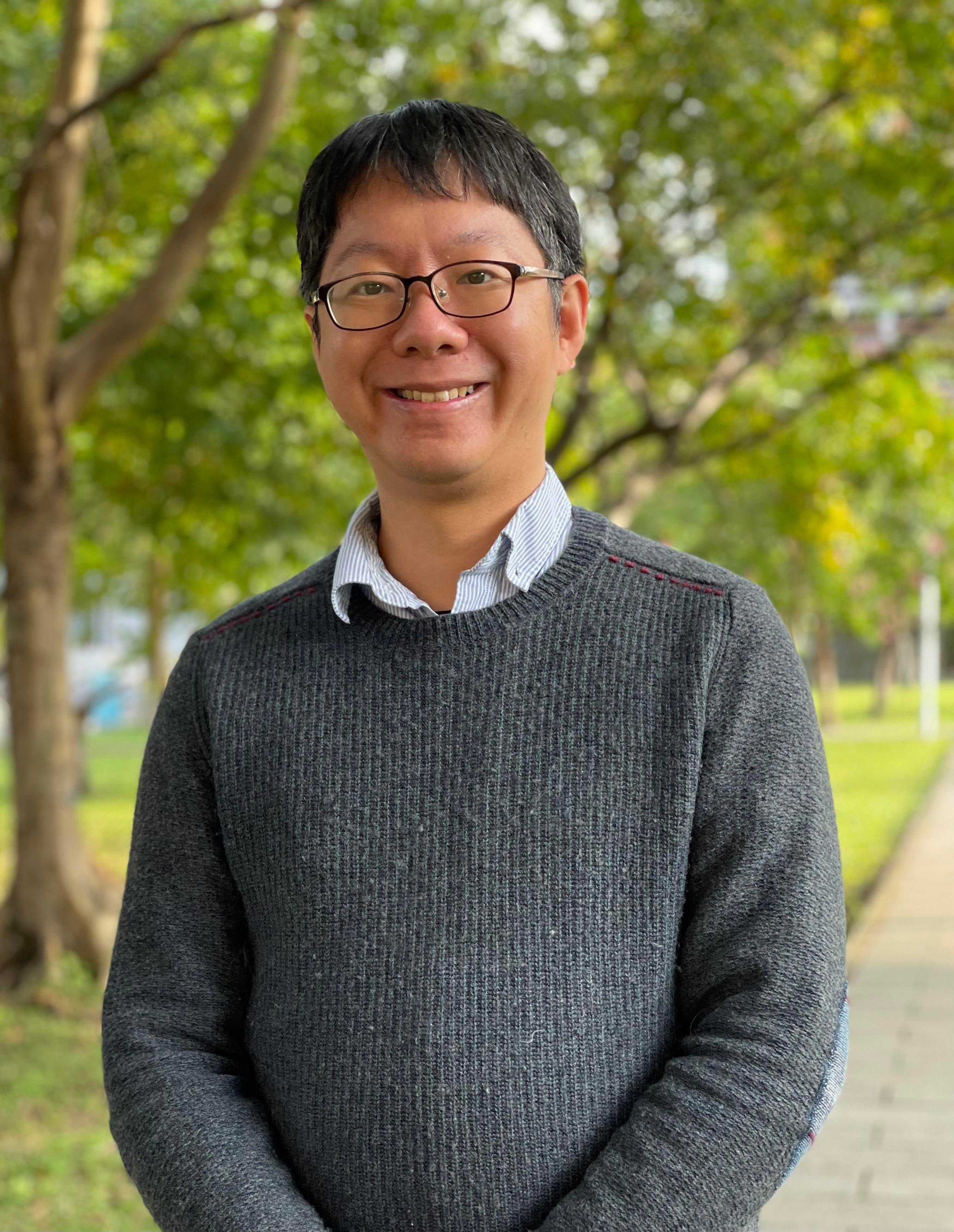

Hsuan-Tien Lin

Professor Hsuan-Tien Lin received a B.S. in Computer Science and Information Engineering from National Taiwan University in 2001, and an M.S. and a Ph.D. in Computer Science from California Institute of Technology in 2005 and 2008, respectively. He joined the Department of Computer Science and Information Engineering at National Taiwan University as an assistant professor in 2008 and was promoted to full professor in 2017. Between 2016 and 2019, he worked as the Chief Data Scientist of Appier, a startup company that specializes in making AI easier for marketing. Currently, he keeps growing with Appier as its Chief Data Science Consultant. From the university, Prof. Lin received the Distinguished Teaching Awards in 2011 and 2021, the Outstanding Mentoring Award in 2013, and five Outstanding Teaching Awards between 2016 and 2020. He co-authored the introductory machine learning textbook Learning from Data and offered two popular Mandarin-taught MOOCs, Machine Learning Foundations and Machine Learning Techniques, based on the textbook. He served the machine learning community as Program Co-chair of NeurIPS 2020, Expo Co-chair of ICML 2021, and Workshop Chair of NeurIPS 2022 and 2023. He co-led the teams that won the championship of KDDCup 2010, the double championship of the two tracks in KDDCup 2011, the championship of track 2 in KDDCup 2012, and the double championship of the two tracks in KDDCup 2013.

Ismini Lourentzou

Ismini Lourentzou is an Assistant Professor at Virginia Tech's Computer Science Department, where she leads the Perception and LANguage (PLAN) Lab. She is a core faculty member of the Sanghani Center for Artificial Intelligence and Data Analytics, an affiliate faculty of the National Security Institute (VT NSI), and an affiliate faculty of the Center for Advanced Innovation in Agriculture (VT CAIA). Her primary research focus is multimodal machine learning, particularly the intersection of vision and language in settings with limited supervision, and its applications in embodied AI, video understanding, healthcare, etc. Lourentzou obtained her Ph.D. in Computer Science from the University of Illinois at Urbana-Champaign and has previously worked as a Research Scientist at IBM Research. She has served as Expo Co-Chair of NeurIPS 2022, Workshop Co-Chair of NeurIPS 2023, Associate PC Chair of ACM PETRA 2021 and 2023, Doctoral Consortium Chair of ACM PETRA 2022, and assumed editorial and Area Chair roles for top-tier journals and conferences (ACL'23, MICCAI'23, PLOS Digital Health Computer Vision Section Editor, etc.). Lourentzou received a 2023 Outstanding New Assistant Professor Award from Virginia Tech College of Engineering and a 2019 IBM Invention Plateau, and was also selected as a 2019 EECS Rising Star. Her research has received support from NSF, DARPA, Amazon, and CCI. Currently, Lourentzou’s PLAN Lab competes in the Alexa Prize TaskBot Challenge 2.

Pascal Notin

Saadia Gabriel

Saadia Gabriel is an NYU Faculty Fellow and incoming UCLA Assistant Professor, with a Ph.D. in Computer Science from the University of Washington. Previously she was an MIT Postdoctoral Fellow. Her research revolves around natural language processing and machine learning, with a particular focus on building systems for understanding how social commonsense manifests in text (i.e., how people typically behave in social scenarios), as well as mitigating the spread of false or harmful text (e.g., Covid-19 misinformation). Her work has been covered by a wide range of media outlets like Forbes and TechCrunch. It has also received a 2019 ACL best short paper nomination and a 2019 IROS RoboCup best paper nomination, and won a best paper award at the 2020 WeCNLP summit.

Marzyeh Ghassemi

Andrew Gordon Wilson

Jake Albrecht

Dr. Jake Albrecht is the Director of Challenges and Benchmarking at Sage Bionetworks, responsible for managing the strategic and operational activities of benchmarking projects and data challenges, including the DREAM Challenges. Jake has a Ph.D. from MIT and a B.S. from the University of Nebraska, both in Chemical Engineering.

Marco Ciccone

Tao Qin

LLMs & agents, AI for Science, protein modeling, DNA/RNA modeling, drug discovery

Remi Denton

Remi Denton (they/them) is a Staff Research Scientist at Google, within the Technology, AI, Society, and Culture team, where they study the sociocultural impacts of AI technologies and conditions of AI development. Prior to joining Google, Remi received their PhD in Computer Science from the Courant Institute of Mathematical Sciences at New York University, where they focused on unsupervised learning and generative modeling of images and video. Prior to that, they received their BSc in Computer Science and Cognitive Science at the University of Toronto.

Though trained formally as a computer scientist, Remi draws ideas and methods from multiple disciplines and is drawn towards highly interdisciplinary collaborations, in order to examine AI systems from a sociotechnical perspective. Remi’s recent research centers on emerging text- and image-based generative AI, with a focus on data considerations and representational harms. Remi published under the name "Emily Denton".

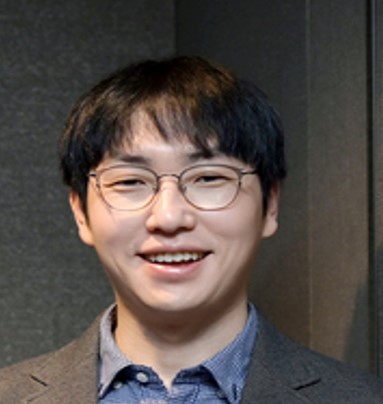

Jung-Woo Ha

- Head, AI Innovation, NAVER Cloud

- Research Fellow, NAVER AI Lab

- Datasets and Benchmarks Co-Chair, NeurIPS 2023

- Socials Co-Chair, ICML 2023

- Socials Co-Chair, NeurIPS 2022

- BS, Seoul National University

- PhD, Seoul National University

Joaquin Vanschoren

Joaquin Vanschoren is an Associate Professor in Machine Learning at the Eindhoven University of Technology. He holds a PhD from the Katholieke Universiteit Leuven, Belgium. His research focuses on understanding and automating machine learning, meta-learning, and continual learning. He founded and leads OpenML.org, a popular open science platform with over 250,000 users that facilitates the sharing and reuse of machine learning datasets and models. He is a founding member of the European AI networks ELLIS and CLAIRE, and an active member of MLCommons. He has received several awards, including an Amazon Research Award, an ECMLPKDD Best Demo award, and the Dutch Data Prize. He was a tutorial speaker at NeurIPS 2018 and AAAI 2021, and has given over 30 invited talks. He co-initiated the NeurIPS Datasets and Benchmarks track and was NeurIPS Datasets and Benchmarks Chair from 2021 to 2023. He also co-organized the AutoML workshop series at ICML, and the Meta-Learning workshop series at NeurIPS. He is editor-in-chief of DMLR (part of JMLR), as well as an action editor for JMLR and machine learning moderator for arXiv. He has authored and co-authored over 150 scientific papers, as well as reference books on Automated Machine Learning and Meta-learning.

Blessing Ogbuokiri

Dr. Blessing Ogbuokiri is an Assistant Professor in the Department of Computer Science at Brock University, Canada, specializing in Machine Learning (ML) and its applications in health. He has extensive experience in ML, Natural Language Processing (NLP), and Theoretical Computing. Dr. Ogbuokiri focuses on developing predictive models for infectious diseases, particularly understanding disease transmission dynamics and evaluating public health interventions. He leads the Responsible and Applied Machine Learning Laboratory (RAML Lab) at Brock University, Canada, where he works on advancing ML methodologies and their applications to real-world challenges. Dr. Ogbuokiri collaborates across disciplines to address community-based infectious disease outbreaks, including COVID-19, Malaria, and Mpox.

Konstantina Palla

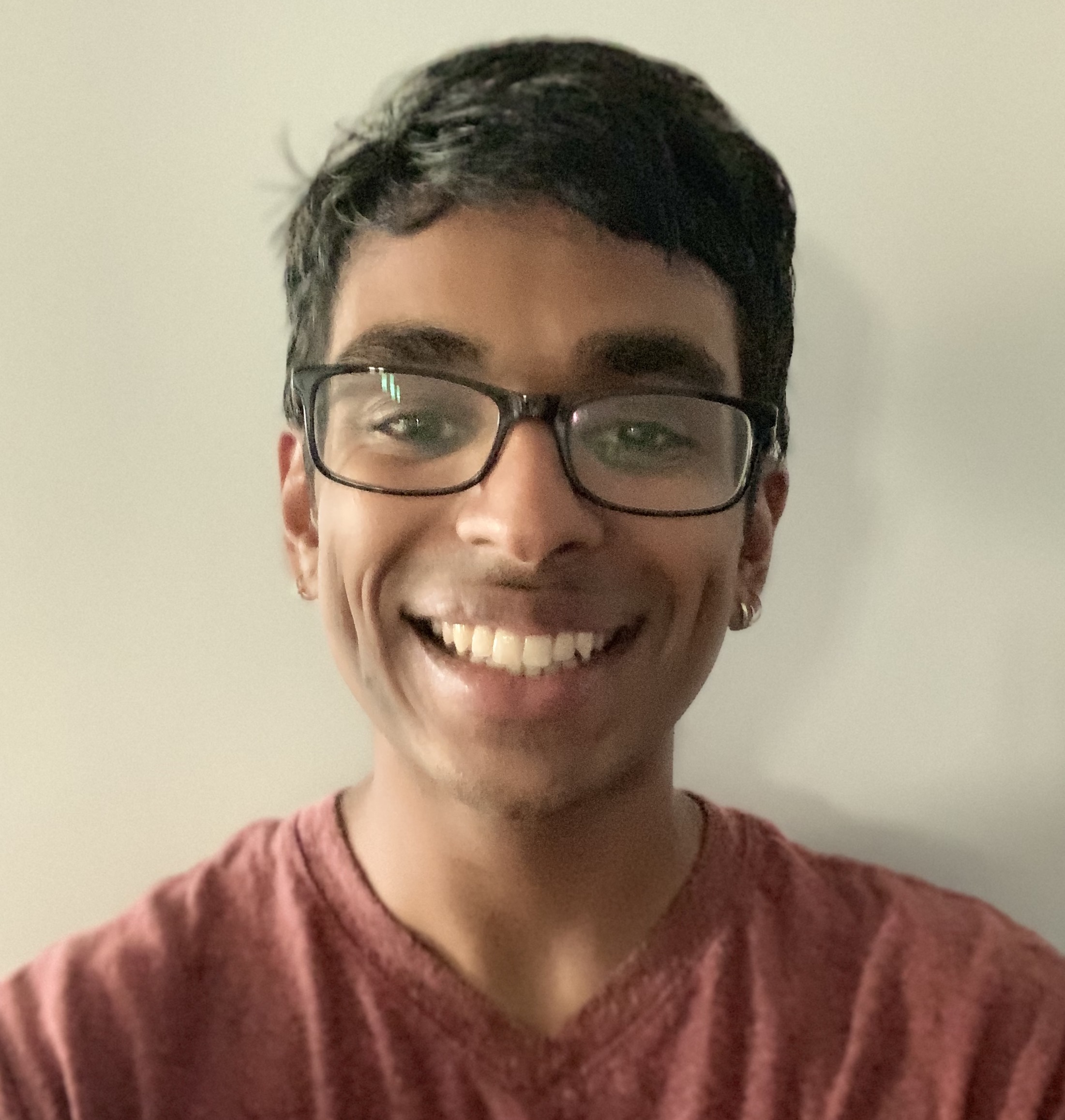

Arjun Subramonian

Arjun Subramonian (they/them) is a Computer Science PhD student at the University of California, Los Angeles. Their research focuses on inclusive graph machine learning and natural language processing, including fairness, bias, ethics, and integrating queer perspectives. They are also a core organizer of Queer in AI.

Erin Grant

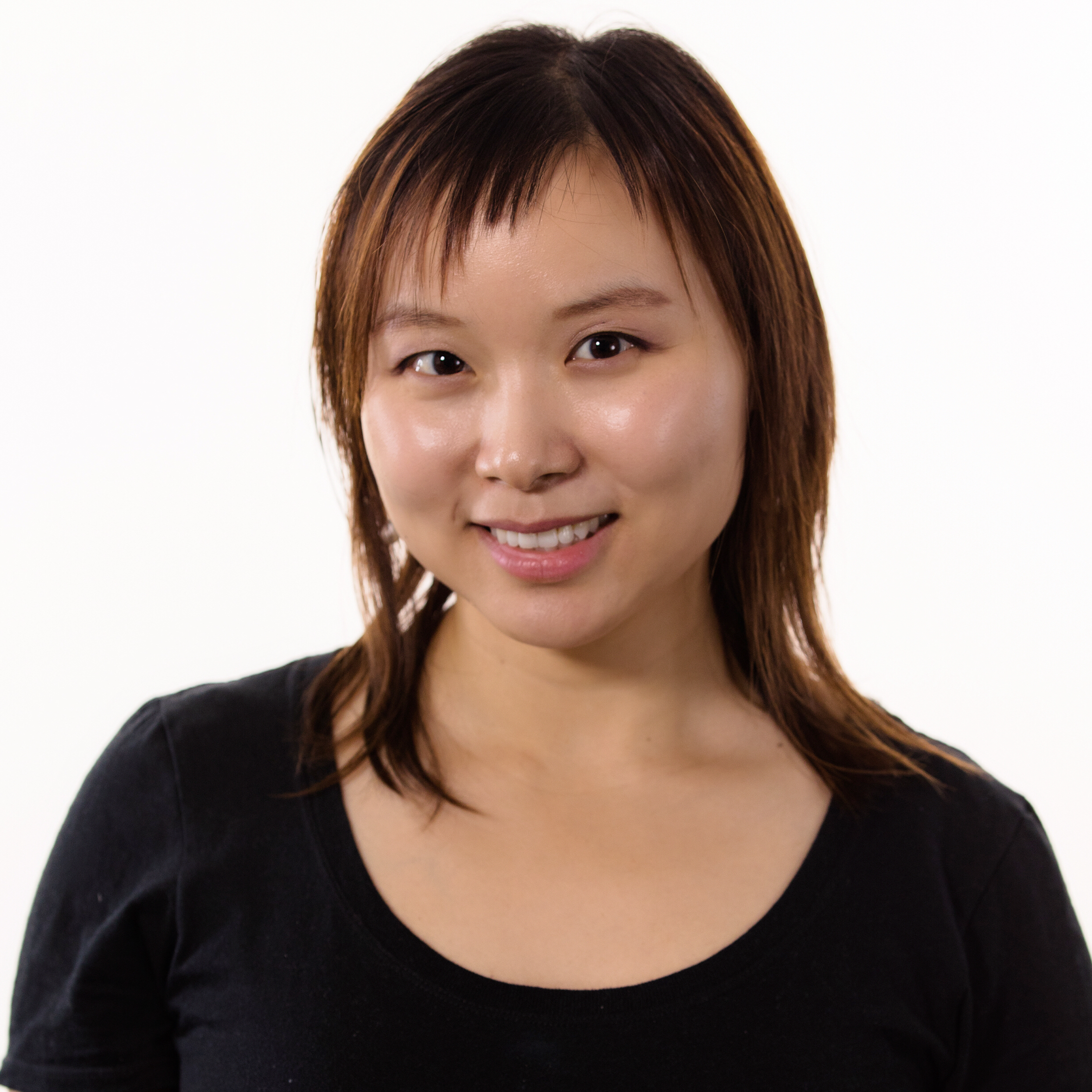

Rosanne Liu

Rosanne Liu is the Co-founder and Executive Director of ML Collective, a non-profit organization providing research training for all, and she is concurrently doing science at Google DeepMind. She was one of the 15 founding members (and the only woman) of Uber AI Labs. Rosanne obtained her PhD in Computer Science at Northwestern University, and has published well-cited research at NeurIPS, ICLR, ICML, Nature and other top venues. She builds communities for underrepresented and unprivileged researchers, organizes symposiums, workshops, and a long-running weekly reading group “Deep Learning: Classics and Trends.” She serves as the Diversity, Equity & Inclusion chair of ICLR 2022-2024, and NeurIPS 2023.

Venkatesh Saligrama

Wenming Ye

Edward Choi

Edward Choi is an assistant professor in the Kim Jaechul AI Graduate School of KAIST. He received his PhD from Georgia Tech in 2018 under the supervision of Dr. Jimeng Sun, focusing on interpretable deep learning methods for handling medical records. Prior to joining KAIST, Edward had engineering and research experience at ETRI, Sutter Health, DeepMind, and Google. His current research interests cover machine learning for healthcare, natural language processing, and multi-modal learning.

Jihun Hamm

Dr. Jihun Hamm has been an Associate Professor of Computer Science at Tulane University since 2019. He received his PhD degree from the University of Pennsylvania in 2008, supervised by Dr. Daniel Lee. His research interest is in machine learning, from theory to applications. He has worked on the theory and practice of robust learning, adversarial learning, privacy and security, optimization, and deep learning. He also has a background in biomedical engineering and has worked on machine learning applications in medical data analysis. His work in machine learning has been published in top venues such as ICML, NeurIPS, CVPR, JMLR, and IEEE-TPAMI. His work has also been published in medical research venues such as MICCAI, MedIA, and IEEE-TMI. Among other awards, he has earned the Best Paper Award from MedIA, was a finalist for the MICCAI Young Scientist Publication Impact Award, and received a Google Faculty Research Award.

Jiahao Chen

I am the Director of Artificial Intelligence and Machine Learning at New York City's Office of Technology and Innovation. I am responsible for work related to the city's AI Action Plan, which is New York City's public commitment to lead the responsible use of innovative artificial intelligence (AI) technology, particularly at the level of local governments. I bring to the role my years of experience in global AI governance and AI risk management, having previously founded Responsible AI LLC, a startup, as well as expertise in ML for financial services and academic research.

I remain active in academic research and am published in leading venues. I am currently an Ethics Chair of the NeurIPS machine learning conference and Area Chair for the ACM FAccT conference on Fairness, Accountability and Transparency.

Lester Mackey

Cherie Poland

Applied Complex Systems Engineering

Amir Bar

Amir Bar is a fourth-year Ph.D. candidate at Tel Aviv University and a Visiting Ph.D. Researcher at UC Berkeley, advised by Amir Globerson and Trevor Darrell. His primary research area centers around self-supervised learning and how to use large amounts of unlabeled images and videos to enable computers to develop visual understanding. Lately, his focus has been on improving learning algorithms for Masked Image Modeling and Visual Prompting, which involves adapting computer vision models during test time for novel computer vision tasks without changing the model weights or task-specific fine-tuning.

Kuan Fang

Kuan Fang is a postdoctoral scholar in the Department of Electrical Engineering and Computer Sciences at UC Berkeley. He received his Ph.D. degree in Electrical Engineering from Stanford University. His research interest lies in robotics, computer vision, and machine learning. He received his bachelor's degree from Tsinghua University and has spent time at Google Brain, X Robotics, and Microsoft Research Asia. He is a recipient of the Stanford Graduate Fellowship and the Computing Innovation Fellowship.

Ping Hu

Ping is a postdoctoral researcher in the IVC group at Boston University. He is working on fast computer vision models, data-efficient learning, and open-world perception.

Irene Chen

Irene Chen is an Assistant Professor in UC Berkeley and UCSF’s Computational Precision Health program with a joint appointment in Berkeley EECS. She received her PhD from MIT EECS as a member of the Clinical Machine Learning group. She received a joint AB/SM degree from Harvard University, and she has worked at Dropbox and Microsoft Research.

Sahra Ghalebikesabi

I am a Research Scientist at Google DeepMind. I obtained my PhD from the University of Oxford, supervised by Chris Holmes. I have interned at DeepMind London and Microsoft Research Cambridge, and my research has also received the Microsoft Research PhD Fellowship. My research focusses on generative modelling for robustness, differential privacy and interpretability.

Alex X Lu

Alex Lu is a Senior Researcher at Microsoft Research New England, in the BioML group. His research focuses on machine learning methods that enable biologists to discover new hypotheses from big biological datasets. Facets of his research program include self-supervised representation learning towards the goal of characterizing previously unknown classes or patterns, foundation models towards the goal of making machine learning accessible to biologists working with more restricted datasets, and robustness and domain generalization to ensure that methods detect biological signal and not technical confounders.

Animesh Garg

Animesh Garg is a Stephen Fleming Early Career Professor at the School of Interactive Computing at Georgia Tech and holds courtesy appointments at University of Toronto and Vector Institute. Animesh is also a Senior Researcher at Nvidia Research. Animesh earned a Ph.D. from UC Berkeley and was previously a postdoc at the Stanford AI Lab. He leads the People, AI, and Robotics (PAIR) research group. His work aims to build Generalizable Autonomy which involves a confluence of representations and algorithms for reinforcement learning, control, and perception — all in the purview of embodied systems.

Alessandra Tosi

Lam Nguyen

Lam M. Nguyen is a Staff Research Scientist at IBM Research, Thomas J. Watson Research Center, working at the intersection of Optimization and Machine Learning/Deep Learning. He is also the PI of ongoing MIT-IBM Watson AI Lab projects. Dr. Nguyen received his B.S. degree in Applied Mathematics and Computer Science from Lomonosov Moscow State University in 2008, his M.B.A. degree from McNeese State University in 2013, and his Ph.D. degree in Industrial and Systems Engineering from Lehigh University in 2018. Dr. Nguyen has extensive research experience in optimization for machine learning problems. He has published his work mainly in top AI/ML and Optimization publication venues, including ICML, NeurIPS, ICLR, AAAI, AISTATS, Journal of Machine Learning Research, and Mathematical Programming. He has been serving as an Action/Associate Editor for Journal of Machine Learning Research, Machine Learning, Neural Networks, IEEE Transactions on Neural Networks and Learning Systems, and Journal of Optimization Theory and Applications, and as an Area Chair for the ICML, NeurIPS, ICLR, AAAI, CVPR, UAI, and AISTATS conferences. His current research interests include design and analysis of learning algorithms, optimization for representation learning, dynamical systems for machine learning, federated learning, reinforcement learning, time series, and trustworthy/explainable AI.

Isabelle Guyon

Isabelle Guyon recently joined Google Brain as a research scientist. She is also professor of artificial intelligence at Université Paris-Saclay (Orsay). Her areas of expertise include computer vision, bioinformatics, and power systems. She is best known for being a co-inventor of Support Vector Machines. Her recent interests are in automated machine learning, meta-learning, and data-centric AI. She has been a strong promoter of challenges and benchmarks, and is president of ChaLearn, a non-profit dedicated to organizing machine learning challenges. She is community lead of Codalab competitions, a challenge platform used both in academia and industry. She co-organized the “Challenges in Machine Learning Workshop” @ NeurIPS between 2014 and 2019, launched the "NeurIPS challenge track" in 2017 while she was general chair, and pushed the creation of the "NeurIPS datasets and benchmark track" in 2021, as a NeurIPS board member.

Jean Oh

Jean Oh is an Associate Research Professor at the Robotics Institute at Carnegie Mellon University and the Director of roBot Intelligence Group (BIG). Jean’s current research is focused on developing high-level intelligence for robots in the domains of autonomous navigation and creativity. Jean’s long-term brainchild, FRIDA, a reference to the vibrant painter Frida Kahlo, stands for a Framework and Robotics Initiative for Developing Arts to promote human creativity and real-world arts. FRIDA supports intuitive and accessible ways for people to collaboratively create artworks including natural language, images, and sounds. Jean’s work has won several best paper awards at IEEE International Conference on Robotics and Automation (ICRA) and IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS) and has been featured in various media around the world including New York Times, The Telegraph, Petit Quotidien, Australian Broadcasting Corporation (ABC), and Korean Broadcasting Systems (KBS).

Hendrik Strobelt

Zhenyu (Sherry) Xue

Talha Irfan

Terri Auricchio

Brad Brockmeyer

Lee Campbell

Brian Nettleton

Mary Ellen Perry

Max Wiesner